Artificial intelligence and machine learning, once futuristic concepts, are now all around us, from digital wallet transactions to voice recognition in Alexa. With that said, certain steps or tactics may need to be enforced on AI/ML systems to protect them from machine learning attack vulnerabilities and produce ‘Trusted AI’ solutions.

Research teams at the Berryville Institute of Machine Learning[1] classified ML attacks into 2 categories, each of which applies to 3 surfaces of a machine-learning system, giving a total of 6 attack vector types.

The Attack Categories

1) Extraction attacks – compromise confidentiality by stealing data and information from systems.

2) Manipulation attacks – compromise integrity by modifying learning data feeds and misleading the ML system into producing undesirable outcomes.

The surfaces of an ML system

1) Model – the machine learning (AI) model as implemented

2) Data – the training and test data used to build the model that the system later executes

3) Input – runtime or real-time input data

Therefore, 2 attack categories × 3 ML surfaces = 6 attack vectors:

- Input Extraction

- Data Extraction

- Model Extraction

- Input Manipulation

- Data Manipulation

- Model Manipulation
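The six vectors are simply the cross product of the two categories and the three surfaces. A minimal Python sketch of that taxonomy, tagging each vector with the security property its category compromises (confidentiality for extraction, integrity for manipulation), might look like this; the names are illustrative only and not a BIML data format.

```python
from itertools import product

# Attack category -> security property it compromises (from the definitions above).
CATEGORIES = {"Extraction": "confidentiality", "Manipulation": "integrity"}
# The three surfaces of an ML system.
SURFACES = ["Input", "Data", "Model"]


def attack_vectors():
    """Return (vector name, compromised property) for all 2 x 3 combinations."""
    return [
        (f"{surface} {category}", prop)
        for (category, prop), surface in product(CATEGORIES.items(), SURFACES)
    ]


for name, prop in attack_vectors():
    print(f"{name:<20} compromises {prop}")
```

The output order matches the list above: the three extraction vectors first, then the three manipulation vectors.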

A strong security solution should address, at a minimum, all six of these attack vectors to protect AI/ML applications.

Applying security gap analysis techniques to protect an AI/ML system

This section explores how the 2 × 3 = 6 machine learning attack vectors listed above can be covered by cybersecurity techniques implemented by Def-Logix.

Input Manipulation – In this attack vector, the input to the ML system is intercepted, modified or replaced outright to coerce the outcome the attacker wants.

One example is a SPAM email crafted to be classified as non-SPAM, which may also carry elements designed to harvest email addresses.

Red-team test-attack input => production ML system => unexpected runtime output => exposes vulnerability. For example:

- a SPAM email marked/sent as non-SPAM => may carry a malicious program that captures the target's email address

- a STOP sign classified as a speed-limit sign => the vehicle slows down but does not stop

Red-Team ACTION: Design test cases that serve as the red-team attack inputs.

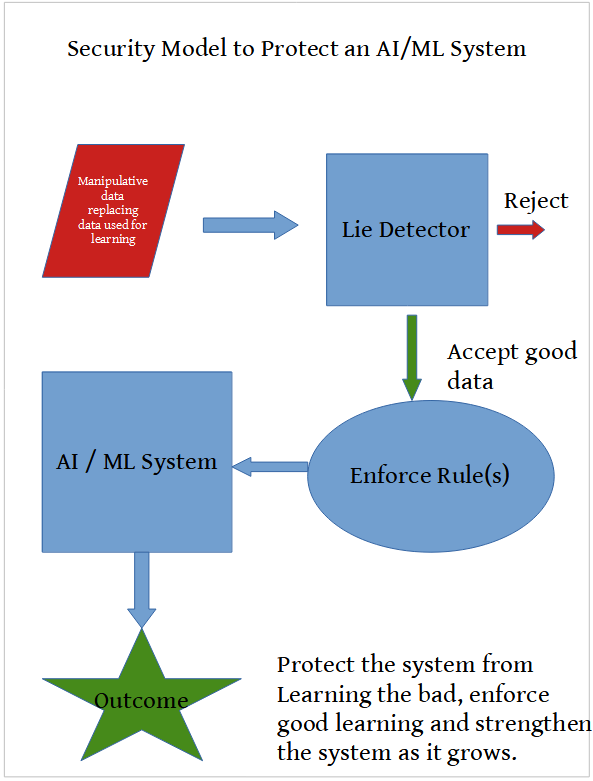
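A red-team test case for input manipulation can be expressed as an automated check: take an input the model correctly flags, apply a small, bounded perturbation aimed at the decision boundary, and see whether the verdict flips. The sketch below assumes a toy linear spam scorer with made-up feature names and weights (`spam_word_ratio`, `link_density`, `sender_reputation` are hypothetical); the perturbation is an FGSM-style step, which for a linear model simply moves each feature against the sign of its weight.

```python
import math

# Hypothetical feature weights: positive values push toward "SPAM".
WEIGHTS = {"spam_word_ratio": 2.0, "link_density": 1.5, "sender_reputation": -2.5}
BIAS = -1.0


def spam_probability(features):
    """Logistic score of a linear model over named features."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def is_spam(features, threshold=0.5):
    return spam_probability(features) >= threshold


def evade(features, epsilon):
    """FGSM-style step for a linear model: shift each feature by epsilon
    against the sign of its weight, which lowers the spam score."""
    return {
        name: value - epsilon * math.copysign(1.0, WEIGHTS[name])
        for name, value in features.items()
    }


def red_team_evasion_test(features, epsilon):
    """True if a perturbation of size epsilon flips SPAM to non-SPAM,
    i.e. the model is vulnerable at this perturbation budget."""
    return is_spam(features) and not is_spam(evade(features, epsilon))


spam_message = {"spam_word_ratio": 1.0, "link_density": 1.0, "sender_reputation": 0.0}
for budget in (0.1, 0.5):
    print(budget, red_team_evasion_test(spam_message, budget))
```

With these made-up numbers the small budget (0.1) leaves the message flagged, while the larger one (0.5) slips it past the filter. In practice the red team would sweep the budget and record the smallest one that flips the verdict; the smaller that budget, the more fragile the model.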

Data Manipulation – also called poisoning. The red-team test-attack system sends “bad” data to the ML system so that it learns bad patterns as good ones, which modifies the behavior of the ML system and misdirects its pattern learning, e.g. manipulative data => financial or weather forecast ML model => unexpected behavior => modification to the learned pattern => erroneous, misleading forecast.

Red-Team ACTION: Design test cases that generate a bad data feed to test-attack a forecasting ML system and modify its behavior and outcome.
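To make the poisoning scenario concrete, the following sketch assumes a deliberately simple forecaster, an ordinary least-squares trend line, and a red-team test that appends a handful of crafted points to its training feed. The data and the `poison` helper are hypothetical; the point is only to show how few bad records it can take to bend a learned pattern.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(xs, ys, x_next):
    """Train on (xs, ys) and predict the value at x_next."""
    slope, intercept = fit_line(xs, ys)
    return slope * x_next + intercept


def poison(xs, ys, count, x_value, y_value):
    """Hypothetical red-team feed: append `count` copies of a crafted (x, y) record."""
    return xs + [x_value] * count, ys + [y_value] * count


# Clean history with a steady upward trend: y = 2x + 10.
history_x = list(range(10))
history_y = [2 * x + 10 for x in history_x]

clean = forecast(history_x, history_y, 10)
poisoned = forecast(*poison(history_x, history_y, 3, 9, 0), 10)
print(f"clean forecast: {clean:.1f}, poisoned forecast: {poisoned:.1f}")
```

Here three crafted records (about a fifth of the feed) turn the forecast for x = 10 from 30 into about 13.5, and flip the learned trend from rising to falling.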

Blue-Team ACTION: Design rules to monitor incoming data, then implement those rules in the security system that will protect the ML system both before and at run-time.
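A minimal sketch of such monitoring rules, assuming incoming records arrive as Python dicts with hypothetical `source` and `temp_c` fields: each record must pass a set of declarative checks, and the batch is also screened for statistical outliers with a median/MAD-based modified z-score, which a few poisoned points cannot drag around the way they can drag a mean. Field names, the allowlist and the thresholds are assumptions to be tuned per system.

```python
from statistics import median

ALLOWED_SOURCES = {"station-a", "station-b"}  # hypothetical allowlist

# Hypothetical declarative rules: (name, predicate that must hold for each record).
RULES = [
    ("source_allowlisted", lambda r: r.get("source") in ALLOWED_SOURCES),
    ("temp_c_in_range", lambda r: -90.0 <= r["temp_c"] <= 60.0),
]


def robust_outliers(values, threshold=3.5):
    """Indices whose modified z-score (median/MAD based) exceeds threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return set()
    return {i for i, v in enumerate(values) if 0.6745 * abs(v - med) / mad > threshold}


def screen_batch(records):
    """Split an incoming batch into accepted records and (index, reasons) rejections."""
    outliers = robust_outliers([r["temp_c"] for r in records])
    accepted, rejected = [], []
    for i, record in enumerate(records):
        reasons = [name for name, rule in RULES if not rule(record)]
        if i in outliers:
            reasons.append("robust_outlier")
        if reasons:
            rejected.append((i, reasons))
        else:
            accepted.append(record)
    return accepted, rejected


batch = [{"source": "station-a", "temp_c": t}
         for t in (20.1, 21.0, 19.8, 20.5, 55.0, 20.2, 150.0)]
accepted, rejected = screen_batch(batch)
print(rejected)  # [(4, ['robust_outlier']), (6, ['temp_c_in_range', 'robust_outlier'])]
```

The value 55.0 passes the fixed range rule but is still caught as a statistical outlier, which is why the sketch pairs hard rules with a batch-level check.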

Model Manipulation – It is common in the machine and deep learning community to share or publish open-source white-box models that can be reused (code reuse) or adopted and further trained by other systems. A malicious attacker can share or publish a tampered model that misleads the systems that adopt it and steers their learning in the wrong direction.

While the red team can probe the authenticity of ML models adopted by the development team, a rule-based security tool such as our own Entrap can monitor functions, methods and callbacks invoked through the OS at run-time to identify malicious model behavior.
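One simple way for the development team to check the authenticity of a third-party model before loading it is to pin a cryptographic digest of the artifact when it is reviewed and refuse any file that no longer matches. The sketch below uses only Python's standard library; the file name and the in-memory digest table are hypothetical, and it does not describe how Entrap works. A matching digest shows the file is unchanged since it was pinned, not that the pinned model was benign, so this complements rather than replaces run-time monitoring.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path, pinned_digests):
    """True only if the artifact's name is pinned and its digest still matches."""
    expected = pinned_digests.get(Path(path).name)
    if expected is None:
        return False
    return hmac.compare_digest(sha256_of(path), expected)


def demo_tamper_check():
    """Pin a stand-in artifact, then simulate an attacker swapping its contents."""
    with tempfile.TemporaryDirectory() as tmp:
        artifact = Path(tmp) / "classifier.bin"  # hypothetical model file
        artifact.write_bytes(b"original weights")
        pinned = {artifact.name: sha256_of(artifact)}
        before = verify_model(artifact, pinned)
        artifact.write_bytes(b"tampered weights")
        after = verify_model(artifact, pinned)
    return before, after


print(demo_tamper_check())  # (True, False)
```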

Input Extraction – To protect AI/ML systems from this attack vector, the security tool must protect the source (entry point) and the transport medium of the input fed to the ML system through targeted rules. Violations of the input feed can be detected by intercepting system API calls or by monitoring the file system.
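A minimal sketch of what such targeted rules might look like once access events have been captured, whether by intercepting system calls or by a file-system monitor. The event shape, paths and process names are hypothetical, and the capture mechanism itself is out of scope here. Paths are normalized before matching so that `..` segments cannot sidestep a rule.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessEvent:
    """One intercepted file access (hypothetical shape, e.g. from an OS audit feed)."""
    process: str
    path: str
    action: str  # "read" or "write"


# Hypothetical targeted rules: protected input location -> processes allowed per action.
INPUT_RULES = {
    "/srv/ml/input": {"read": {"inference-server"}, "write": {"ingest-gateway"}},
}


def protected_root(path):
    """Return the protected directory containing `path`, if any."""
    normalized = posixpath.normpath(path)  # collapse "..", "." and duplicate slashes
    for root in INPUT_RULES:
        if normalized == root or normalized.startswith(root + "/"):
            return root
    return None


def violations(events):
    """Return events that touch protected input without an allowing rule."""
    flagged = []
    for event in events:
        root = protected_root(event.path)
        if root is None:
            continue
        if event.process not in INPUT_RULES[root].get(event.action, set()):
            flagged.append(event)
    return flagged


events = [
    AccessEvent("inference-server", "/srv/ml/input/batch-001.json", "read"),
    AccessEvent("backup-agent", "/srv/ml/input/../input/batch-001.json", "read"),
    AccessEvent("backup-agent", "/var/log/syslog", "read"),
]
for event in violations(events):
    print("ALERT:", event)
```

Only the second event is flagged: the same reader is ignored outside the protected directory, and the allowed inference server is not.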

Data Extraction – To protect ML systems from this attack vector, robust rules must be built to prevent unauthorized access to the training data fed to the ML model.
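On a POSIX host, one basic rule of this kind is that training-data files should be readable only by the account that runs training. The sketch below audits a directory for group- or world-accessible files and tightens them; the file names are hypothetical, and on Windows `os.chmod` only toggles the read-only flag, so ACLs would be needed there instead.

```python
import os
import stat
import tempfile
from pathlib import Path

# Any group/other read or write bit is treated as a violation for training data.
LOOSE_BITS = stat.S_IRGRP | stat.S_IWGRP | stat.S_IROTH | stat.S_IWOTH


def overly_permissive(root):
    """Return training-data files under `root` readable or writable beyond the owner."""
    return sorted(
        path for path in Path(root).rglob("*")
        if path.is_file() and path.stat().st_mode & LOOSE_BITS
    )


def lock_down(paths):
    """Restrict each file to owner read/write (0o600)."""
    for path in paths:
        os.chmod(path, stat.S_IRUSR | stat.S_IWUSR)


def demo_permission_audit():
    """Create two stand-in data files, audit, tighten, and audit again."""
    with tempfile.TemporaryDirectory() as tmp:
        shared = Path(tmp) / "train_shared.csv"  # hypothetical file names
        private = Path(tmp) / "train_private.csv"
        for path, mode in ((shared, 0o644), (private, 0o600)):
            path.write_text("feature,label\n")
            os.chmod(path, mode)
        before = [p.name for p in overly_permissive(tmp)]
        lock_down(overly_permissive(tmp))
        after = [p.name for p in overly_permissive(tmp)]
    return before, after


print(demo_permission_audit())  # (['train_shared.csv'], []) on POSIX systems
```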

Model Extraction – This is an attack on the ML model itself. A partially white-box model can be attacked to “see”, copy or extract its parameters and behavior patterns. The model can be protected at 2 levels. First, avoid white-box models where possible, so that the model's internals are not accessible. Second, if open-source model code must be used within a custom model, the outer ML system should be closed and secured.
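Why open query access alone can be enough to extract a model is easiest to see with a linear model that returns raw scores: with n features, n + 1 well-chosen queries recover every weight and the bias exactly (an equation-solving style of extraction). The black box and its parameters below are hypothetical, and more complex models need many more queries, but the lesson carries over: the outer system that answers queries should be closed and access-controlled.

```python
def make_black_box(weights, bias):
    """Hypothetical deployed model: only predict() is exposed, not its parameters."""
    def predict(x):
        return sum(w * xi for w, xi in zip(weights, x)) + bias
    return predict


def extract_linear_model(predict, n_features):
    """Recover weights and bias of a linear scorer with n_features + 1 queries."""
    bias = predict([0.0] * n_features)  # f(0) = b
    weights = []
    for i in range(n_features):
        unit = [0.0] * n_features
        unit[i] = 1.0
        weights.append(predict(unit) - bias)  # f(e_i) - b = w_i
    return weights, bias


secret = make_black_box([0.5, -1.25, 3.0], 0.75)
print(extract_linear_model(secret, 3))  # ([0.5, -1.25, 3.0], 0.75)
```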

Strong passwords, password-protected web portals, desktop and embedded applications, industry-established authentication modules for two-factor or multi-factor authentication, Active Directory lookup and other such security measures should be used to harden the privacy wall around the system.
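Most of these measures come from established products rather than custom code, but one piece teams sometimes implement themselves is password storage for a portal. A minimal sketch with Python's standard library stores only a random salt and a slow PBKDF2-HMAC-SHA256 hash, never the password itself. The iteration count is an assumption to be checked against current guidance, and an established authentication module should be preferred wherever one is available.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # assumption: tune to current guidance and hardware


def hash_password(password, iterations=ITERATIONS):
    """Return (salt, iterations, derived key) for storage; never store the password."""
    salt = secrets.token_bytes(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, iterations, key


def check_password(password, salt, iterations, key):
    """Recompute the key with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(candidate, key)


record = hash_password("correct horse battery staple")
print(check_password("correct horse battery staple", *record))  # True
print(check_password("password123", *record))  # False
```

Storing the iteration count next to the salt lets it be raised later without invalidating existing records.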

Def-Logix security portfolio and product line can help keep AI/ML systems from being compromised and misused

Cybersecurity teams at Def-Logix have been working diligently to create a product line that includes Shimmix, Entrap and the Security Enhanced System framework, all of which are rule-driven, flexible, parameterized and generic enough to be customized or tailored to the target AI/ML application being developed.

While a security tool that protects an ML system from vulnerabilities may need to be unique to the ML domain, it can inherit some simple techniques from this existing product portfolio, and the products can also be bundled or paired on the target platform.

Lastly, there is DevSecOps: Secure Agile CI/CD of AI/ML systems

DevSecOps (development, security and operations) adds another layer that helps keep vulnerabilities and holes out of the continuous deployment process.

Some DevSecOps techniques can be:

- Code scanning for holes and vulnerabilities

- Penetration testing (a.k.a. “red-team attacks”) of products, tools, services and web services, both on the organization’s premises (corporate network) and in the public cloud.

- Leveraging and integrating 3rd party DevSecOps tools
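As one narrow, concrete instance of code scanning, a CI job can refuse to ship code that contains hard-coded credentials. The sketch below is a hypothetical minimal scanner with two illustrative patterns (the AWS access key ID format and PEM private-key headers); dedicated third-party scanners cover far more cases and would normally be integrated instead.

```python
import re

# Two illustrative rules; real scanners ship far larger, tuned rule sets.
SECRET_RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(text):
    """Return (line number, rule name) for every line matching a secret rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_RULES.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def gate(paths):
    """CI entry point: print findings and return a non-zero exit code if any."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as fh:
            for lineno, name in scan_text(fh.read()):
                print(f"{path}:{lineno}: possible secret ({name})")
                failed = True
    return 1 if failed else 0


sample = 'aws_key = "AKIAABCDEFGHIJKLMNOP"\n'
print(scan_text(sample))  # [(1, 'aws_access_key_id')]
```

In a pipeline, a step could call `sys.exit(gate(changed_files))` so that any finding fails the build.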

Many thanks to Def-Logix's own Leena Kudalkar for contributing much of the vital research.

References

[1] Berryville Institute of Machine Learning https://berryvilleiml.com
